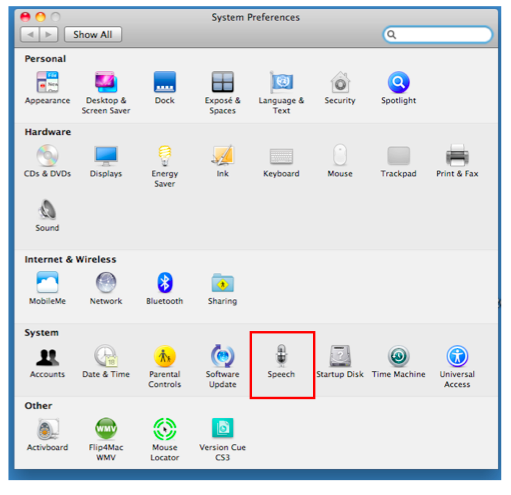

- Mac tts voices


Meaningful and natural speech remains a uniquely human attribute. Each major release of OS X has boasted improvements in text to speech and speech to text, but do they yet match the abilities of a human?

Macs can work with speech in both directions, either converting written text into spoken words or transcribing your speech into written text. These are generally known as text to speech (TTS) and natural language speech recognition (NLSR), respectively, and are viewed primarily as ‘assistive’ technologies that can facilitate computer use by those with physical impairments. At various times the subject of wild claims about huge and revolutionary advances, both are currently viewed as niche applications, and are often omitted when considering mainstream input and output techniques.

It is perhaps salutary to look back around 35 years to the Texas Instruments 99/4, one of the first home computers, whose Speech Synthesiser was far more advanced, and capable of more natural speech output, than today’s general-purpose computers. By comparison, many of the current crop of TTS products seem as robotic as the voice of Star Wars’ R2-D2.

Apple’s own speech products were launched with the very first Mac, in the form of MacinTalk, a software-based speech synthesis system which could perform fairly crude TTS. When later Motorola 68K-powered Macs became available with digital signal processing (DSP) hardware, MacinTalk became far more usable and less of a comical novelty. NLSR also allowed Mac users to control their computers using voice commands, but not dictate text to assemble documents. IBM had been a pioneer of NLSR dictation products, with its VoiceType and subsequent ViaVoice systems primarily for PCs, but it was MacSpeech iListen that fired enthusiasm among Mac users.